Autonomous Self-Landing UAV

Vision-Based Autonomous Landing System Using YOLO and Raspberry Pi

Project Gallery

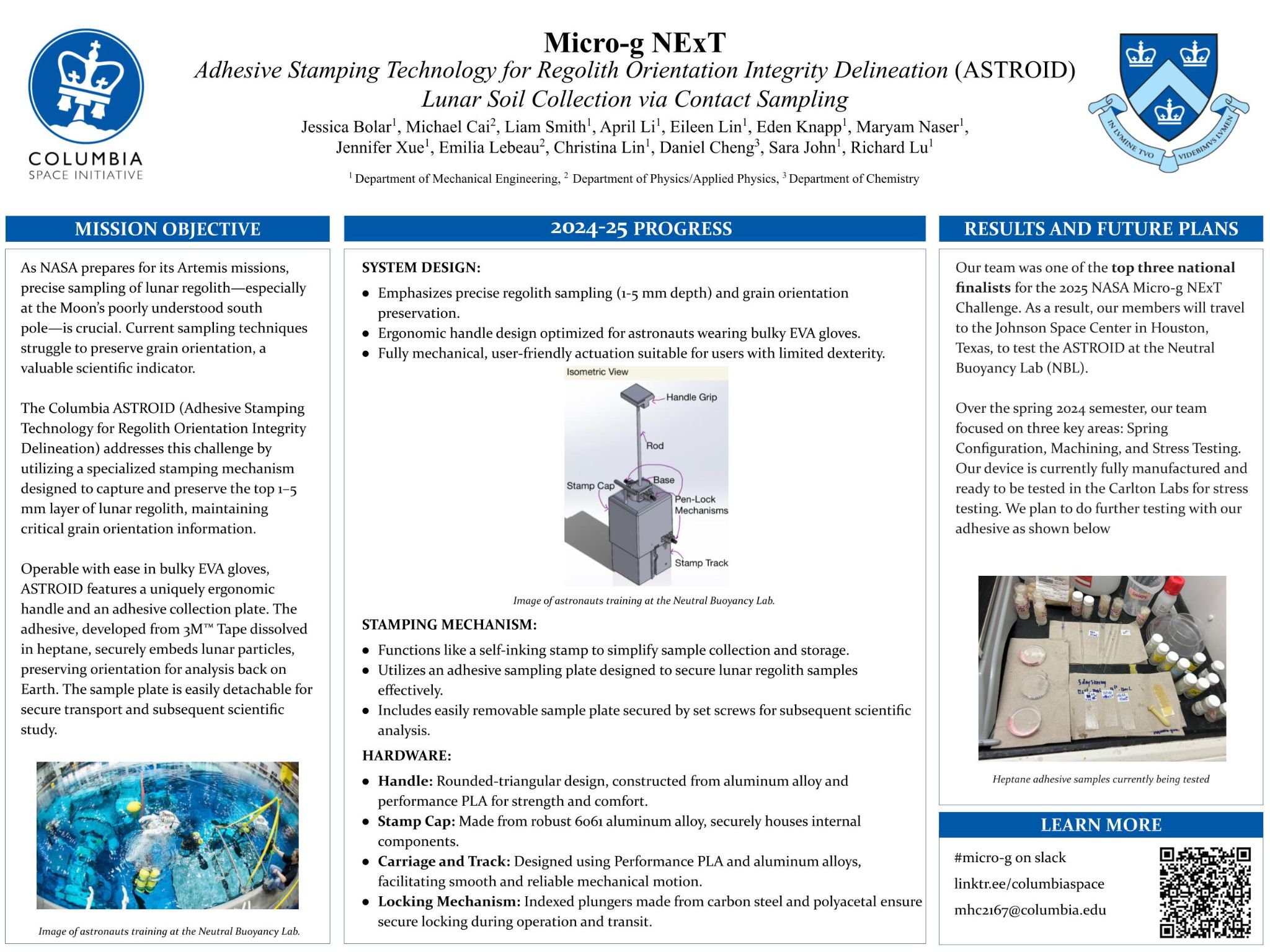

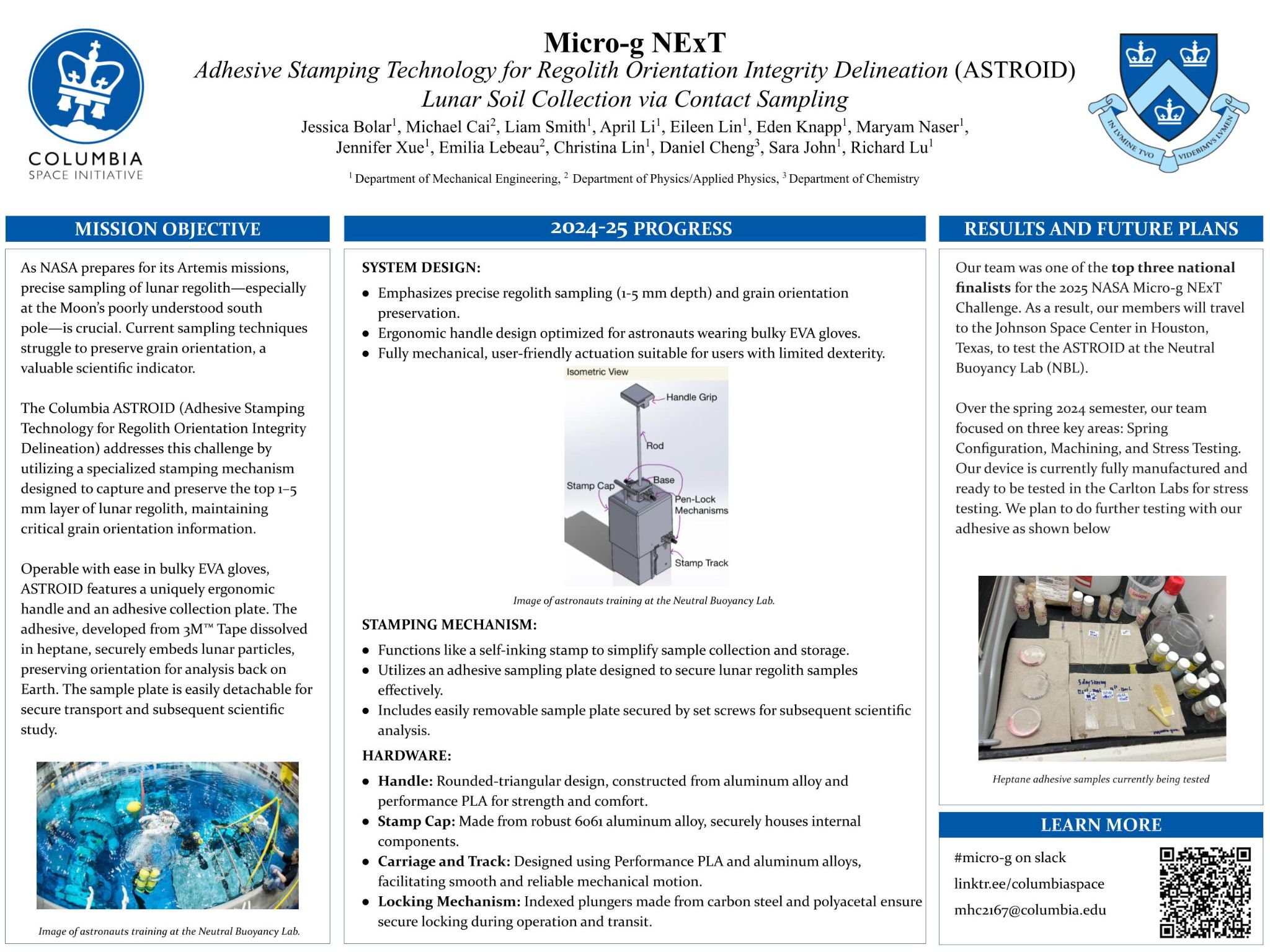

Labelled diagram of the ASTROID device

Me at the NBL control panel observing the test run-through along with the rest of CSI's team.

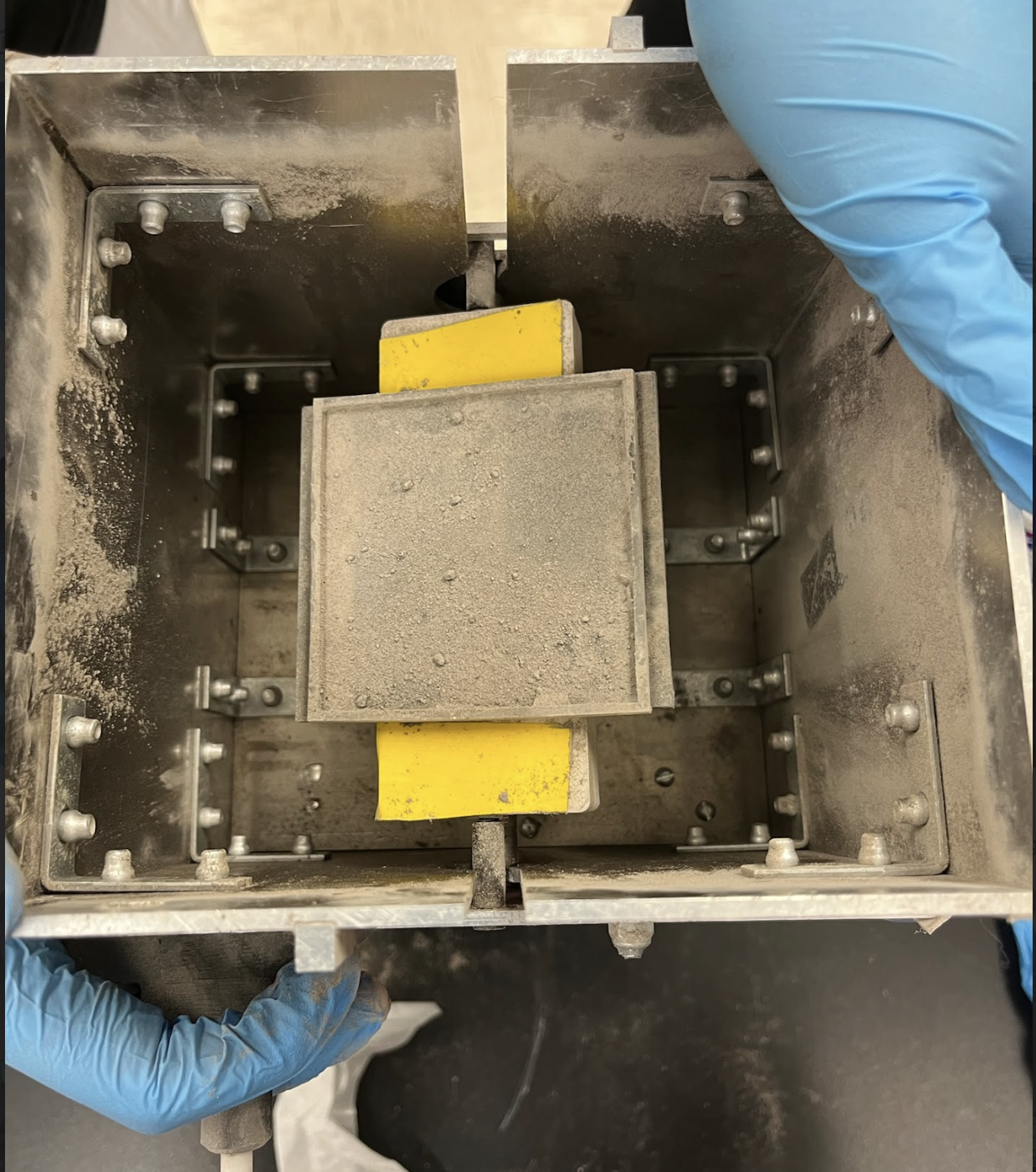

Photograph of ASTROID device after testing in the Lunar Simulant Lab

Research poster for presentation

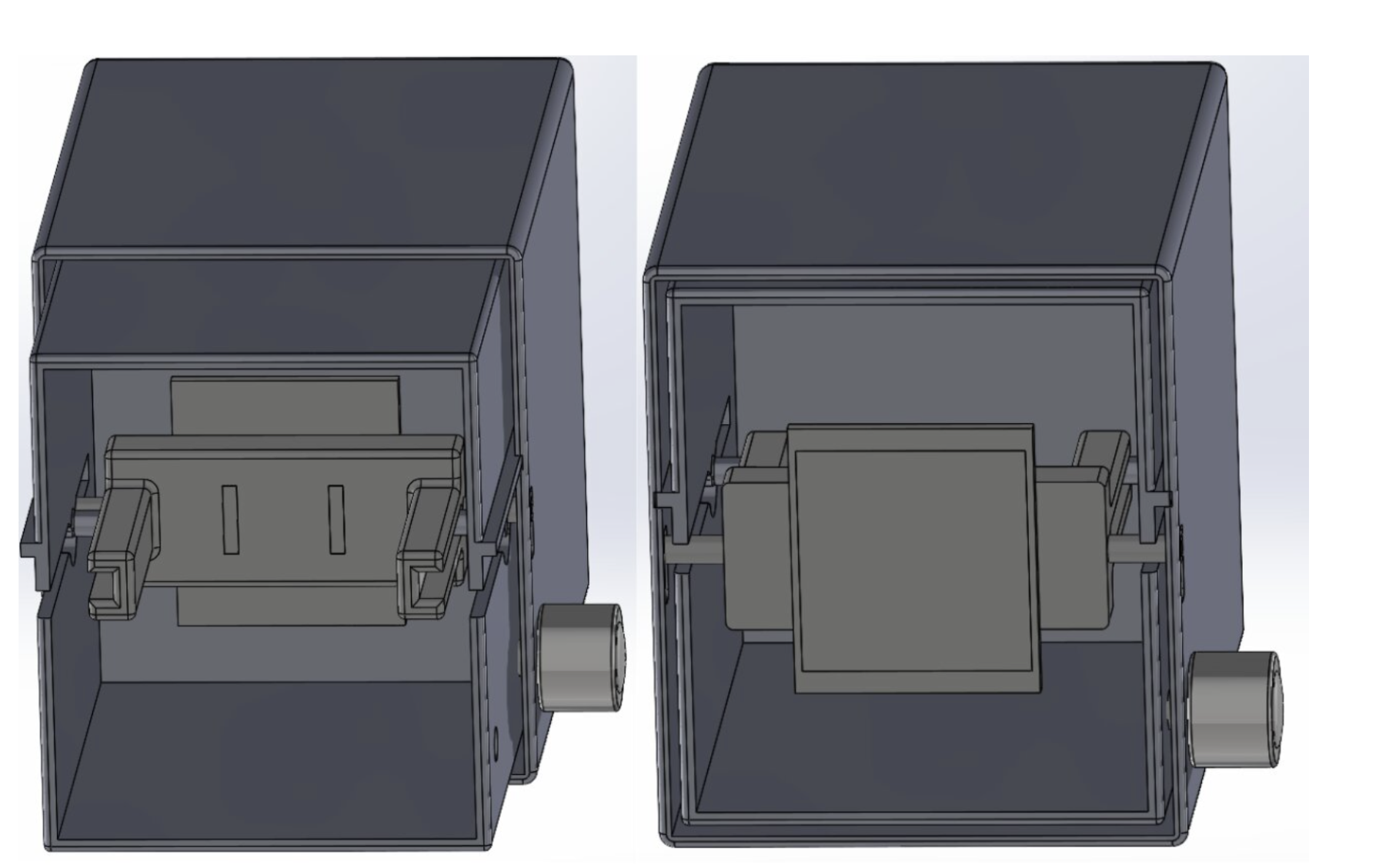

SOLIDWORKS model showing ASTROID device functionality

Video showing ASTROID functionality, as tested by me

General Overview

This summer, I set out to build a model airplane capable of landing itself autonomously using only visual guidance and an ultrasonic distance sensor that measures height above the ground. My goal was to create a resilient system that could land from any configuration on any strip of land that can function as a runway.

This project sits at the intersection of computer vision, machine learning, mechatronics, and flight control. I am still training my models to improve recognition and guidance while I work out the legality of my plans, and I'm excited about where this project might go. This was also perhaps the first project where I've used AI to generate the framework of my code base.

Technologies and Skills Used

System Architecture

Vision System (Raspberry Pi + YOLO): The Raspberry Pi processes visual signals and determines long-term flight commands. It runs a customized YOLO visual recognition model, paired with Kalman filters and inertial navigation, that is trained to identify runways, estimate distances, and detect obstacles reliably. The YOLO model processes video frames in real time to extract critical landing information, including runway orientation, distance, and approach angle.
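As a minimal sketch of the Kalman filtering mentioned above, the following constant-velocity filter smooths a noisy one-dimensional measurement such as the estimated distance to the runway. The class name `Kalman1D`, the noise values, and the simplified diagonal process noise are assumptions for illustration, not the project's actual filter.

```python
from dataclasses import dataclass


@dataclass
class Kalman1D:
    """Constant-velocity Kalman filter over a scalar position measurement."""
    pos: float = 0.0
    vel: float = 0.0
    # Covariance entries; large starting values express initial ignorance.
    p00: float = 100.0
    p01: float = 0.0
    p10: float = 0.0
    p11: float = 100.0
    q: float = 0.01  # process noise added per step (assumed value)
    r: float = 0.25  # measurement noise variance (assumed value)

    def predict(self, dt: float) -> None:
        """Propagate the state by dt seconds: P = F P F^T + Q, F = [[1, dt], [0, 1]]."""
        self.pos += self.vel * dt
        p00 = self.p00 + dt * (self.p10 + self.p01) + dt * dt * self.p11 + self.q
        p01 = self.p01 + dt * self.p11
        p10 = self.p10 + dt * self.p11
        p11 = self.p11 + self.q
        self.p00, self.p01, self.p10, self.p11 = p00, p01, p10, p11

    def update(self, z: float) -> None:
        """Blend in a position measurement z (measurement matrix H = [1, 0])."""
        s = self.p00 + self.r          # innovation variance
        k0 = self.p00 / s              # gain applied to position
        k1 = self.p10 / s              # gain applied to velocity
        y = z - self.pos               # innovation
        self.pos += k0 * y
        self.vel += k1 * y
        # P = (I - K H) P
        p00 = (1.0 - k0) * self.p00
        p01 = (1.0 - k0) * self.p01
        p10 = self.p10 - k1 * self.p00
        p11 = self.p11 - k1 * self.p01
        self.p00, self.p01, self.p10, self.p11 = p00, p01, p10, p11
```

Calling `predict(dt)` once per frame and `update(z)` whenever a detection arrives lets the filter keep estimating position through a dropped frame, since `predict` can run without a matching `update`.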

Primary Flight Controller (ESP32): The ESP32 microcontroller acts as the primary flight controller, receiving commands from the Raspberry Pi and translating them into servo movements and throttle adjustments. Additionally, it handles flight stabilization and

Safety Backup (Arduino): An Arduino microcontroller sits in the signal path and can enable or disable both transmitter and Pi-issued signals. It provides basic stabilization and emergency landing capabilities, ensuring the aircraft can be recovered even if the vision or ESP32 systems malfunction.
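The gating role of the safety backup can be illustrated with a small arbitration function. This is only a sketch of the decision logic in Python; real firmware on the Arduino would be written in C++, and every name here (`Command`, `select_command`, `HEARTBEAT_TIMEOUT_S`) as well as the priority order is a hypothetical choice, not the project's actual design.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Command:
    """Normalized control outputs (hypothetical ranges: surfaces -1..1, throttle 0..1)."""
    aileron: float
    elevator: float
    rudder: float
    throttle: float


HEARTBEAT_TIMEOUT_S = 0.5  # assumed: autopilot counts as dead after this much silence


def select_command(
    pilot_override: bool,
    transmitter_cmd: Optional[Command],
    autopilot_cmd: Optional[Command],
    heartbeat_age_s: float,
    failsafe_cmd: Command,
) -> Tuple[str, Command]:
    """Pick which command source drives the servos.

    Assumed priority: pilot override, then a fresh autopilot command,
    then the transmitter, then a fixed failsafe command.
    """
    if pilot_override and transmitter_cmd is not None:
        return "transmitter", transmitter_cmd
    if autopilot_cmd is not None and heartbeat_age_s <= HEARTBEAT_TIMEOUT_S:
        return "autopilot", autopilot_cmd
    if transmitter_cmd is not None:
        return "transmitter", transmitter_cmd
    return "failsafe", failsafe_cmd
```

Putting the pilot override first means a human can always take the aircraft back, which is the property a backup layer like this is meant to guarantee.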

Development Progress

- Programmed initial YOLO visual recognition model for runway detection and identification

- Integrated Raspberry Pi hardware with camera system for real-time image capture

- Configured ESP32 as flight controller with servo and throttle control capabilities

- Conducted testing with real runway images to validate accuracy and object detection

- Mocked up the placement of system components inside the test aircraft to check center of gravity (CG) and mass

Current Challenges

Processing Speed Optimization: The primary challenge is refining the YOLO model and decision framework to operate on a timescale workable for high-speed flight. Model airplanes approach and land at speeds of 20-40 mph, requiring processing and decision cycles measured in milliseconds. Current work focuses on model optimization, frame rate management, and predictive algorithms that can anticipate required control inputs.
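To make the timing constraint concrete, the short calculation below converts approach speed and processing cycle time into the distance the aircraft covers between decisions. The cycle times in the loop are assumed sample values, not measurements from this project. At 20 to 40 mph, a 100 ms cycle corresponds to roughly 0.9 m to 1.8 m of travel.

```python
MPH_TO_MPS = 0.44704  # exact definition: 1 mph = 0.44704 m/s


def distance_per_cycle_m(speed_mph: float, cycle_ms: float) -> float:
    """Distance in meters flown during one processing cycle."""
    return speed_mph * MPH_TO_MPS * (cycle_ms / 1000.0)


if __name__ == "__main__":
    for speed_mph in (20, 40):
        for cycle_ms in (33, 100, 250):  # assumed example cycle times
            travelled = distance_per_cycle_m(speed_mph, cycle_ms)
            print(f"{speed_mph} mph, {cycle_ms} ms cycle: {travelled:.2f} m")
```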

Real-Time Decision Making: Translating vision data into flight control commands requires sophisticated decision algorithms that can handle varying lighting conditions, wind effects, and unexpected obstacles.

Hardware Integration: Fitting multiple processors (Raspberry Pi, ESP32, Arduino) along with sensors, servos, and power systems into the confined space of my model (a 1.4 m scale Cirrus SR22) is difficult. Weight distribution and power management are the main sticking points.

YOLO Visual Recognition Model

YOLO (You Only Look Once) is a family of real-time object detection models. Unlike earlier detection pipelines that run region proposal and classification as separate stages, YOLO predicts bounding boxes and class probabilities for the entire image in a single pass, making it well suited to applications that need fast, real-time performance, such as autonomous flight.

Training Process: The model is being trained on a custom dataset of runway images captured from various angles, distances, and lighting conditions. Training includes normal runways, emergency landing strips, and various ground markings to ensure robust detection capability across different scenarios.

Output Data: The trained model outputs runway bounding boxes, confidence scores, distance estimates, and orientation angles. This data feeds into the decision framework which calculates required heading adjustments, descent rates, and throttle settings for a safe landing approach.
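As one illustration of how a bounding box could feed a heading adjustment, the sketch below converts the horizontal center of a detection into a bearing relative to the camera axis using a pinhole camera model. The `Detection` type, the function name, and the 62.2 degree field of view in the usage note are assumptions; the sketch also ignores lens distortion and assumes the camera points straight along the aircraft's nose.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical detector output: box corners in pixels plus a confidence score."""
    x1: float
    y1: float
    x2: float
    y2: float
    confidence: float


def horizontal_bearing_deg(det: Detection, image_width_px: int, hfov_deg: float) -> float:
    """Angle of the box center from the optical axis (positive means right of center)."""
    center_x = (det.x1 + det.x2) / 2.0
    # Pinhole model: focal length in pixels follows from the horizontal field of view.
    focal_px = (image_width_px / 2.0) / math.tan(math.radians(hfov_deg / 2.0))
    offset_px = center_x - image_width_px / 2.0
    return math.degrees(math.atan(offset_px / focal_px))
```

For example, `horizontal_bearing_deg(Detection(300, 200, 340, 260, 0.9), 640, 62.2)` gives a bearing of zero because the box is centered in a 640-pixel-wide frame; the field-of-view value would need to match whatever camera is actually fitted.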

Decision Framework Architecture

- State machine architecture tracking flight phases: search, approach, final, flare, touchdown

- PID controllers for stable heading and altitude control integrated via ArduPilot

- Kalman filtering to smooth noisy vision data and predict future positions

- Risk assessment algorithms that evaluate landing safety and can trigger go-around

- Wind estimation using ground speed calculations and drift compensation

- Energy management ensuring sufficient altitude and airspeed throughout approach

- Failsafe logic monitoring system health and triggering backup systems if needed
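The flight-phase state machine listed above could be sketched as follows. The phase names come from the list; the `Observation` fields, the threshold constants, and the rule that an unsafe landing assessment sends the aircraft back to search (a go-around) are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Phase(Enum):
    SEARCH = auto()
    APPROACH = auto()
    FINAL = auto()
    FLARE = auto()
    TOUCHDOWN = auto()


@dataclass(frozen=True)
class Observation:
    """Hypothetical per-cycle inputs from the vision system and ultrasonic sensor."""
    runway_visible: bool
    distance_m: float    # estimated distance to the runway threshold
    altitude_m: float    # height above ground
    landing_safe: bool   # result of the risk assessment


# Assumed thresholds; real values would come from flight testing.
FINAL_DISTANCE_M = 50.0
FLARE_ALTITUDE_M = 1.0
TOUCHDOWN_ALTITUDE_M = 0.1


def next_phase(phase: Phase, obs: Observation) -> Phase:
    """Return the flight phase for the next control cycle."""
    if phase is Phase.TOUCHDOWN:
        return phase
    # Go-around: abandon the approach if it is judged unsafe or the runway is lost.
    if phase in (Phase.APPROACH, Phase.FINAL) and not (obs.landing_safe and obs.runway_visible):
        return Phase.SEARCH
    if phase is Phase.SEARCH:
        return Phase.APPROACH if obs.runway_visible and obs.landing_safe else Phase.SEARCH
    if phase is Phase.APPROACH:
        return Phase.FINAL if obs.distance_m <= FINAL_DISTANCE_M else Phase.APPROACH
    if phase is Phase.FINAL:
        return Phase.FLARE if obs.altitude_m <= FLARE_ALTITUDE_M else Phase.FINAL
    # Remaining case is FLARE: committed to landing, wait for ground contact.
    return Phase.TOUCHDOWN if obs.altitude_m <= TOUCHDOWN_ALTITUDE_M else Phase.FLARE
```

Keeping the transitions in one pure function makes them easy to replay against recorded data, since the function has no hardware dependencies.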

Testing Methodology

Benchtop Testing: Currently testing the vision system and decision framework using pre-recorded runway footage and simulated flight data. This allows rapid iteration without risk to aircraft hardware. Accuracy and response time measurements inform optimization efforts.
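A response-time measurement of the kind described above might look like the following harness, which times an arbitrary per-frame function over a sequence of frames. The function name `measure_latency_ms` and the `process_frame` callable are placeholders; in practice `process_frame` would wrap the detection and decision step under test.

```python
import time
from statistics import mean
from typing import Any, Callable, Dict, Iterable


def measure_latency_ms(
    process_frame: Callable[[Any], Any],
    frames: Iterable[Any],
) -> Dict[str, float]:
    """Time process_frame on each frame and summarize latency in milliseconds."""
    samples = []
    for frame in frames:
        start = time.perf_counter()
        process_frame(frame)
        samples.append((time.perf_counter() - start) * 1000.0)
    if not samples:
        raise ValueError("no frames supplied")
    return {
        "frames": len(samples),
        "mean_ms": mean(samples),
        "worst_ms": max(samples),
    }
```

Reporting the worst case alongside the mean matters here because a single slow cycle during the flare is more dangerous than a slightly higher average.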

Ground Testing: Next, I will likely fly the aircraft with the system running passively while I land it manually, recording the system's behavior and comparing it with my own control inputs.

Flight Testing Plan: Full flight testing will likely not happen because of legal restrictions on autonomous UAVs.

Skills Being Developed

- Machine learning model training and optimization for embedded systems

- Real-time computer vision programming and image processing

- Embedded systems integration with multiple microcontrollers

- Flight control algorithms and PID tuning

- Sensor fusion and state estimation techniques

- System architecture design for safety-critical applications

- Aerodynamic considerations for autonomous flight

- Systematic testing and debugging of complex integrated systems

Timeline & Milestones

Summer 2025: Project initiation, YOLO model development, hardware procurement and initial integration.

Fall 2025 - Spring 2026: Model training and optimization.

Summer 2026: Flight testing without control authority to measure performance in a real setting.

Expected Achievement: Crossing my fingers for a safe flight! (Though legally this will never occur)

Future Enhancements

- Obstacle detection and avoidance during approach

- Emergency landing site selection when runway unavailable

- Full autonomous flight including takeoff, navigation, and landing

Broader Applications

I intend for this project to serve as a stepping stone to a future larger project that could assist GA pilots landing on unfinished runways. Another potential future project is to use this visual guidance technology to create a glider able to autonomously loiter.